Azure Pre-Deployment Requirements

Requirements to set up DataForge in a Microsoft Azure environment.

Create a Service Email Account or Distribution List

Create a distribution group or Microsoft 365 group for all third-party account sign-ups (for example, dataforgeservice@customer.com).

- DataForge uses native Azure services wherever possible, but relies on some third-party vendors to allow easier per-client customization and simpler version upgrades and rollbacks.

- This generic email account is used to sign up for the accounts below (for example, Terraform Cloud and GitHub), so you will need access to its inbox.

Create Azure Environment

Use a service account not tied to any individual. The account must be able to create Active Directory resources.

Upgrade to Pay-As-You-Go and create a dedicated subscription (or use an existing one). The $29/month Azure support plan is recommended, since quotas may need to be raised for new accounts.

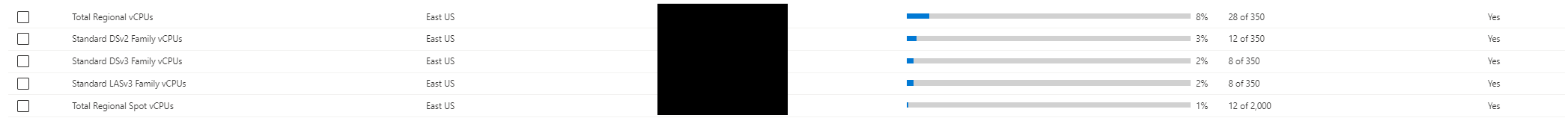

Raise the following quotas to at least 50–100 vCPUs (LASv3 can be 10–20, since it is only used during Terraform runs):

- Total Regional vCPUs

- Standard DSv2 Family vCPUs

- Standard DSv3 Family vCPUs

- Standard LASv3 Family vCPUs

- Total Regional Spot vCPUs
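As a rough pre-check, the current limits can be compared against these targets with the Az PowerShell module's `Get-AzVMUsage` cmdlet. This is a sketch under assumptions: `Get-QuotaShortfall` and `$requiredQuotas` are hypothetical names, the thresholds are the lower ends of the ranges above, and the quota display names are assumed to match what `Get-AzVMUsage` reports for your region.

```powershell
# Lower ends of the recommended ranges above (LASv3 is only used during Terraform runs).
$requiredQuotas = @{
    'Total Regional vCPUs'        = 50
    'Standard DSv2 Family vCPUs'  = 50
    'Standard DSv3 Family vCPUs'  = 50
    'Standard LASv3 Family vCPUs' = 10
    'Total Regional Spot vCPUs'   = 50
}

# Hypothetical helper: returns one object per listed quota whose limit is below its target.
function Get-QuotaShortfall {
    param(
        [Parameter(Mandatory)] [object[]]  $Usage,     # objects shaped like Get-AzVMUsage output
        [Parameter(Mandatory)] [hashtable] $Required   # quota display name -> minimum limit
    )
    foreach ($item in $Usage) {
        $name = $item.Name.LocalizedValue
        if ($Required.ContainsKey($name) -and $item.Limit -lt $Required[$name]) {
            [pscustomobject]@{
                Quota    = $name
                Limit    = $item.Limit
                Required = $Required[$name]
            }
        }
    }
}

# Usage (requires Az.Compute and an authenticated session; use your own region):
# Get-QuotaShortfall -Usage (Get-AzVMUsage -Location 'eastus') -Required $requiredQuotas
```

Quotas that do not appear in the `Get-AzVMUsage` output at all are not reported, so an empty result only means no listed quota was found below its target.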

New Azure accounts may have VM creation blocked. After setting up the subscription, try creating a VM; if that fails, follow this process before deploying DataForge: https://docs.microsoft.com/en-us/troubleshoot/azure/general/region-access-request-process

If a brand-new Azure account is required, we strongly recommend completing these steps 1–2 weeks before the deployment.

Decide on Public or Private Endpoint Architecture

Public Endpoints

- The UI and API are accessible on the public internet, secured with Auth0 for authentication and an SSL certificate for HTTPS

- On-prem source systems can use the Agent to stream data into the platform, bypassing the need for firewall changes or VPN tunneling

Private Endpoints

- The UI is accessed through a private VM deployed in the DataForge VNet; connections to the VM are made using Azure Bastion

- The API is not publicly exposed

- The Agent can only access networks that can be VNet-peered to the DataForge VNet

Contact the DataForge team for diagrams of both architectures.

Define DNS Names and Process for Managing Records

- Define one name for the UI and one for the API (for example, prod.dataforge.com and api.prod.dataforge.com)

- Delegate a subdomain or create DNS records in your DNS provider; GoDaddy is recommended if no DNS provider is currently in use
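Once the records exist, a quick way to confirm that both names resolve is a lookup through .NET's `System.Net.Dns` class, which works in both Windows PowerShell and PowerShell 7. This is a minimal sketch: `Test-DnsName` is a hypothetical helper, and a successful lookup only shows that a record exists, not that it points at the right target.

```powershell
# Hypothetical helper: $true if the name resolves to at least one IP address.
function Test-DnsName {
    param([Parameter(Mandatory)] [string] $Name)
    try {
        return ([System.Net.Dns]::GetHostAddresses($Name)).Count -gt 0
    }
    catch {
        # Names that do not resolve (or lookups that fail outright) end up here.
        return $false
    }
}

# Usage, with the example names from the list above:
# 'prod.dataforge.com', 'api.prod.dataforge.com' |
#     ForEach-Object { [pscustomobject]@{ Name = $_; Resolves = Test-DnsName -Name $_ } }
```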

SSL Certificate

A valid SSL certificate, controlled by the client organization, is required for secure connections and TLS termination for the DataForge websites. Choose one of the following options:

- Use an existing certificate and define a subdomain allocated to DataForge.

- Purchase a new SSL certificate for a new domain or subdomain.

- DigiCert (digicert.com) is an Azure partner; GoDaddy is recommended if your DNS is managed with GoDaddy.

- Deployment requires either a wildcard certificate or two single-domain certificates per environment.

- The certificate must cover the DNS names defined in the previous step.

- After the purchase is complete, verify ownership of the domain to receive the certificate. This is required for deployment.
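Under the general TLS matching rule, a wildcard covers exactly one extra label. For example, `*.dataforge.com` covers `prod.dataforge.com` but not `api.prod.dataforge.com`, so the example names from the previous step would need a certificate whose names cover both (for example, a wildcard plus an explicit entry) or two single-domain certificates. The sketch below implements that rule; `Test-CertificateCoversName` is a hypothetical helper, and you supply the certificate's Subject Alternative Name entries yourself.

```powershell
# Hypothetical helper: does any certificate name (exact or "*.domain") cover the host?
function Test-CertificateCoversName {
    param(
        [Parameter(Mandatory)] [string[]] $CertNames,   # e.g. the certificate's SAN entries
        [Parameter(Mandatory)] [string]   $HostName     # e.g. api.prod.dataforge.com
    )
    $target = $HostName.ToLowerInvariant().TrimEnd('.')
    foreach ($certName in $CertNames) {
        $pattern = $certName.ToLowerInvariant().TrimEnd('.')
        if ($pattern -eq $target) { return $true }
        if ($pattern.StartsWith('*.')) {
            # A leading wildcard stands in for exactly one label, never several.
            $firstDot = $target.IndexOf('.')
            if ($firstDot -gt 0 -and $target.Substring($firstDot + 1) -eq $pattern.Substring(2)) {
                return $true
            }
        }
    }
    return $false
}

# Usage:
# Test-CertificateCoversName -CertNames '*.dataforge.com' -HostName 'prod.dataforge.com'      # True
# Test-CertificateCoversName -CertNames '*.dataforge.com' -HostName 'api.prod.dataforge.com'  # False
```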

Create a Docker Hub Account

Create a Docker Hub account (free edition, service account recommended). Share the Docker username with the DataForge team during initial deployment.

Create an Auth0 Account

If an Auth0 account does not already exist, create one using the following guidance:

- We recommend this account is not tied to an employee

- https://auth0.com/

- The free tier is the minimum, but the “Developer” tier ($23/month, with external users, 100 external active users, and 1,000 machine-to-machine tokens) is recommended if you are deploying more than one environment

- Create an account for the DataForge deployment team
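Once the Management API application exists, its credentials (the values later used for auth0ClientId, auth0ClientSecret, and auth0Domain) can be sanity-checked before deployment by requesting a token through Auth0's client-credentials flow. This is a sketch, not part of the DataForge tooling: `New-Auth0TokenRequest` is a hypothetical helper, and it assumes the application is authorized for the Auth0 Management API (audience `https://<domain>/api/v2/`).

```powershell
# Hypothetical helper: builds Invoke-RestMethod parameters for Auth0's
# client-credentials token request against the Management API.
function New-Auth0TokenRequest {
    param(
        [Parameter(Mandatory)] [string] $Domain,        # auth0Domain, e.g. wmpdemo.auth0.com
        [Parameter(Mandatory)] [string] $ClientId,      # auth0ClientId
        [Parameter(Mandatory)] [string] $ClientSecret   # auth0ClientSecret
    )
    $body = @{
        grant_type    = 'client_credentials'
        client_id     = $ClientId
        client_secret = $ClientSecret
        audience      = "https://$Domain/api/v2/"
    } | ConvertTo-Json

    return @{
        Method      = 'Post'
        Uri         = "https://$Domain/oauth/token"
        ContentType = 'application/json'
        Body        = $body
    }
}

# Usage (makes a real network call):
# $request = New-Auth0TokenRequest -Domain 'wmpdemo.auth0.com' -ClientId '<id>' -ClientSecret '<secret>'
# $token   = Invoke-RestMethod @request
# $token.access_token   # returned only if the credentials and API authorization are valid
```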

Create a Terraform Cloud Account

- We recommend this account is not tied to an employee

- https://www.terraform.io/

- This account is used to manage the Terraform deployment in the cloud

- The free edition will suffice for DataForge infrastructure.

Create a GitHub Account

Create a GitHub account, which is used to access the DataForge source code.

Share the GitHub Account Username and Email Address with the DataForge Team and Create the Fork

Share the GitHub username and email address with the DataForge team, who will grant read access to the DataForge repositories. Then follow the guide for forking the repository and setting up the Terraform VCS provider.

Sign up for DataForge Subscription and Support

Use the following link to choose a support agreement with DataForge and enter your company and payment information for billing: https://checkout.dataops.intellio.wmp.com

This agreement provides you access to the DataForge platform, platform version upgrades, and ongoing product support.

Choose a VPN (Optional, Can Be Done Later)

The VPN must be deployable into an Azure VNet; OpenVPN is one option that can be deployed into the DataForge environment.

Set Terraform Variable Parameters

| Variable | Example | Description |
|---|---|---|
| vpcCidrBlock | 10.0 | First two octets of the VNet's /16 address space (for example, 10.1 yields 10.1.0.0/16). Avoid the address ranges listed in Azure's virtual network restrictions. |
| dockerUsername | wmp | Docker Hub username of the account that will have access to WMPDockerhub |
| dockerPassword | xxxxx | Password for the Docker Hub account above |
| RDSmasterpassword | xxxxx | Administrative password for the Postgres database. Use any printable ASCII character except /, double quotes, or @. |
| auth0ClientId | 384u3kddxj112j3 | Client ID of the Auth0 account's Management API application |
| environment | AzureDev | Name of the environment to deploy; prepended to all resource names (e.g., Dev) |
| auth0ClientSecret | s09df098ds0f8s0d8f0sd98f0s | Client secret of the Auth0 account's Management API application |
| auth0Domain | wmpdemo.auth0.com | Domain of the Auth0 account |
| client | orgname | Client name; appended to all resource names (e.g., WMP) |
| clientSecret | c6fxxxxxbf1-axxa-43d1-axx8-c50669xxxxef | Azure client secret of the user or app that authenticates the deployment |
| clientId | c6fxxxxxbf1-axxa-43d1-axx8-c50669xxxxef | Azure client ID of the user or app that authenticates the deployment |
| dnsZone | azure.wmpdemo.com | Base domain covered by the wildcard certificate |
| region | East US | Azure region to deploy the environment to |
| tenantId | c6fxxxxxbf1-axxa-43d1-axx8-c50669xxxxef | Azure tenant ID |
| subscriptionId | c6fxxxxxbf1-axxa-43d1-axx8-c50669xxxxef | Azure subscription ID |
| cert | | Base64-encoded contents of the SSL certificate (see SSL Certificate Contents below) |
| certPassword | | Password for the PFX certificate file; only required for a password-protected PFX |
| imageVersion | 5.2.0 | DataForge platform version to deploy |
| readOnlyUsername | readonly | Username for the Postgres read-only user |
| readOnlyPassword | xxxxx | Password for the Postgres read-only user |
| sparkVersion | 7.3.x-scala2.12 | Default Databricks Spark runtime version |
| instanceType | Standard_DS3_v2 | Default Databricks cluster instance (VM) type |
| miniSparkyAutoTermination | 120 | Auto-termination time for mini-sparky, in minutes |
| usageAuth0Secret | xxxx | Auth0 secret for usage collection; provided by the DataForge team during deployment |
| usagePassword | xxxxxxx | Alphanumeric password for the usage user; should be autogenerated |
| vmUsername | dataforgeadmin | Username for the admin account of the Bastion-connected VM |
| vmPassword | xxxxx | Password for the admin account of the Bastion-connected VM |
| vmName | dataforge-vm | Custom VM name for private DataForge access (16-character limit) |
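A few of these values have format rules that are easy to get wrong. The sketch below checks the vpcCidrBlock, RDSmasterpassword, and vmName rules from the table and generates a random alphanumeric value suitable for usagePassword. All function names are hypothetical. The reserved ranges in `Test-VnetPrefix` (127.0.0.0/8, 169.254.0.0/16, 168.63.129.16/32, 224.0.0.0/4, 255.255.255.255/32) are the ones Azure documents for virtual networks; confirm them against the current Azure restrictions before relying on the check.

```powershell
# Hypothetical helper: validates the vpcCidrBlock value ("a.b" means a.b.0.0/16).
function Test-VnetPrefix {
    param([Parameter(Mandatory)] [string] $Prefix)
    $m = [regex]::Match($Prefix, '^([0-9]{1,3})\.([0-9]{1,3})$')
    if (-not $m.Success) { return $false }
    $a = [int]$m.Groups[1].Value
    $b = [int]$m.Groups[2].Value
    if ($a -gt 255 -or $b -gt 255) { return $false }
    # Reject /16 blocks that overlap ranges Azure reserves for virtual networks.
    if ($a -eq 127) { return $false }                  # 127.0.0.0/8 (loopback)
    if ($a -ge 224 -and $a -le 239) { return $false }  # 224.0.0.0/4 (multicast)
    if ($a -eq 169 -and $b -eq 254) { return $false }  # 169.254.0.0/16 (link-local)
    if ($a -eq 168 -and $b -eq 63) { return $false }   # contains 168.63.129.16/32
    if ($a -eq 255 -and $b -eq 255) { return $false } # contains 255.255.255.255/32
    return $true
}

# Hypothetical helper: RDSmasterpassword rule from the table
# (printable ASCII only, excluding /, double quote, and @).
function Test-PostgresMasterPassword {
    param([string] $Password)
    return ($Password -cmatch '^[\x20-\x7E]+$') -and ($Password -notmatch '[/"@]')
}

# Hypothetical helper: vmName has a 16-character limit.
function Test-VmName {
    param([string] $Name)
    return $Name.Length -ge 1 -and $Name.Length -le 16
}

# Hypothetical helper: cryptographically random alphanumeric string (for usagePassword).
function New-AlphanumericPassword {
    param([ValidateRange(8, 128)] [int] $Length = 32)
    $alphabet = [char[]]'ABCDEFGHIJKLMNOPQRSTUVWXYZabcdefghijklmnopqrstuvwxyz0123456789'
    $rng = [System.Security.Cryptography.RandomNumberGenerator]::Create()
    $buffer = [byte[]]::new(1)
    $result = [System.Text.StringBuilder]::new()
    try {
        while ($result.Length -lt $Length) {
            $rng.GetBytes($buffer)
            # Discard bytes >= 248 (4 * 62) so every character is equally likely.
            if ($buffer[0] -lt 248) {
                [void]$result.Append($alphabet[$buffer[0] % $alphabet.Length])
            }
        }
    }
    finally {
        $rng.Dispose()
    }
    return $result.ToString()
}
```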

Optional variables for tuning container sizes:

| Variable | Example | Description |
|---|---|---|
| apiCPU | 2 | CPU for the API container (cores) |
| apiMemory | 4 | Memory for the API container (GB) |
| coreCPU | 2 | CPU for the Core container (cores) |
| coreMemory | 4 | Memory for the Core container (GB) |
| agentCPU | 1 | CPU for the Agent container (cores) |
| agentMemory | 2 | Memory for the Agent container (GB) |

For a non-public-facing deployment, add the following variable:

| Variable | Example | Description |
|---|---|---|
| publicFacing | no | Makes the infrastructure deploy non-public-facing resources |
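If you prefer to script the workspace variables instead of entering them in the Terraform Cloud UI, the Terraform Cloud Variables API accepts one JSON:API document per variable. The sketch below builds that document and marks the secret-bearing variables from the tables above as sensitive. It is an illustration, not DataForge tooling: `New-TfcVariablePayload` and `$sensitiveKeys` are hypothetical, and the workspace ID and API token in the usage comment are placeholders you would supply.

```powershell
# Variables from the tables above that carry secrets (hypothetical classification).
$sensitiveKeys = @(
    'dockerPassword', 'RDSmasterpassword', 'auth0ClientSecret', 'clientSecret', 'cert',
    'certPassword', 'readOnlyPassword', 'usageAuth0Secret', 'usagePassword', 'vmPassword'
)

# Hypothetical helper: JSON:API body for POST /api/v2/workspaces/<workspace-id>/vars.
function New-TfcVariablePayload {
    param(
        [Parameter(Mandatory)] [string] $Key,
        [Parameter(Mandatory)] [AllowEmptyString()] [string] $Value
    )
    $payload = @{
        data = @{
            type       = 'vars'
            attributes = @{
                key       = $Key
                value     = $Value
                category  = 'terraform'   # a Terraform variable, not an environment variable
                hcl       = $false
                sensitive = $sensitiveKeys -contains $Key
            }
        }
    }
    return $payload | ConvertTo-Json -Depth 5
}

# Usage (placeholders: $workspaceId and the TFC_TOKEN environment variable):
# Invoke-RestMethod -Method Post `
#     -Uri "https://app.terraform.io/api/v2/workspaces/$workspaceId/vars" `
#     -Headers @{ Authorization = "Bearer $env:TFC_TOKEN" } `
#     -ContentType 'application/vnd.api+json' `
#     -Body (New-TfcVariablePayload -Key 'region' -Value 'East US')
```

Marking secrets as sensitive keeps their values write-only in the workspace after they are set.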

SSL Certificate Contents

The "cert" variable requires the SSL certificate contents in Base64 encoding so they can be stored as a text variable. To produce this, download the certificate in .pfx format. If the certificate is not already in .pfx format, make sure the certificate's private key is on hand so the certificate can be converted to .pfx. Once the certificate is downloaded, run these two commands in Windows PowerShell (change the path in the first line to point to the .pfx file on your local system):

```powershell
# Windows PowerShell 5.1 syntax; in PowerShell 7+, replace -Encoding Byte with -AsByteStream
$fileContentBytes = Get-Content 'C:\<path-to-certificate>.pfx' -Encoding Byte
[System.Convert]::ToBase64String($fileContentBytes) | Out-File 'pfx-encoded-bytes.txt'
```

Then open pfx-encoded-bytes.txt and paste its contents into the "cert" variable in Terraform. If the certificate is password-protected, add the "certPassword" variable to Terraform with the certificate password as its value.
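Before pasting the value into Terraform, it can help to confirm that the encoded text round-trips to a loadable certificate with the expected password. The sketch below decodes the Base64 text and loads it with .NET's `X509Certificate2` class; `Test-EncodedPfx` is a hypothetical helper name, and the file name in the usage comment matches the one written by the commands above.

```powershell
# Hypothetical helper: decodes the Base64 text and tries to load it as a PFX.
function Test-EncodedPfx {
    param(
        [Parameter(Mandatory)] [string] $Base64Text,
        [string] $Password = ''   # leave empty for a PFX without a password
    )
    try {
        $bytes = [System.Convert]::FromBase64String($Base64Text.Trim())
        $cert  = [System.Security.Cryptography.X509Certificates.X509Certificate2]::new($bytes, $Password)
        # HasPrivateKey must be True for the certificate to be usable for TLS termination.
        return [pscustomobject]@{
            Subject       = $cert.Subject
            NotAfter      = $cert.NotAfter
            HasPrivateKey = $cert.HasPrivateKey
        }
    }
    catch {
        Write-Error "Could not load the encoded PFX: $($_.Exception.Message)"
    }
}

# Usage:
# $encoded = Get-Content 'pfx-encoded-bytes.txt' -Raw
# Test-EncodedPfx -Base64Text $encoded -Password '<certificate password>'
```

A load failure usually points to a wrong password, a truncated copy of the text, or a file that was not a .pfx to begin with.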

Next Steps

Once all prerequisites are complete and the variable values have been determined, go to the Performing the Deployment guide to begin deploying DataForge resources.

Verify the Deployment

Once DataForge is up and running, the Data Integration Example in the Getting Started Guide can be followed to verify that the full DataForge stack is working correctly.